Mark Gurman delivered more Siri news this week, and I’m left with the same feeling I’ve had for over a year now: equal parts hope and frustration.

Here’s the picture as it currently stands. Apple is planning two separate Siri overhauls, releasing months apart.

The Spring Update: iOS 26.4

The first update arrives with iOS 26.4, expected around March or April. This is the non-chatbot version built on a custom Google Gemini model running on Apple’s Private Cloud Compute servers. The goal here seems straightforward: finally cash all those checks Apple wrote at WWDC 2024.

Remember those promises? Siri that understands personal context. Siri that can find the book recommendation your mom texted you. Siri that works across apps instead of being confined to one at a time. Features that were supposed to ship with iOS 18, then got pushed to “later,” then pushed again to 2026.

The Fall Overhaul: iOS 27

Then, just a few months later at WWDC 2026, Apple plans to announce an entirely different approach. This one is codenamed “Campos,” and it’s a full chatbot experience. Think Claude or ChatGPT, but baked directly into your iPhone, iPad, and Mac. Voice and text inputs. Persistent conversations you can return to. The works.

Craig Federighi has previously expressed skepticism about chatbot interfaces, preferring AI that’s “integrated into everything you do, not a bolt-on chatbot on the side.” But competitive pressure from OpenAI and others seems to have changed the calculus.

Why Two Versions?

This is where I get frustrated. Releasing two fundamentally different versions of Siri months apart doesn’t inspire confidence. The first version sounds like something they cobbled together just to say they kept their promises. I’d almost prefer they skipped it entirely and focused all their energy on the chatbot.

Why This Matters

I’ve been critical of Siri over the last decade. Every year Apple makes promises it can’t keep. Every WWDC brings demos of features that arrive late, broken, or not at all.

And yet I continue to believe that a smart model on our Apple devices, with access to our local data, where everything either stays private on the device or runs through Private Cloud Compute, could be one of the best implementations of AI we’ve seen.

Think about who this could help. I spent 30 years practicing law. I know firsthand how many professionals are locked out of these AI tools because the privacy story isn’t good enough. Apple could change that.

And for the rest of us? We’re not particularly excited about sharing our personal information with giant AI companies either. A truly private assistant that actually knows your life without selling it to advertisers? That’s the dream.

Apple is uniquely positioned to deliver this. They have the hardware. They have the ecosystem integration. They have the privacy infrastructure. They have over 2 billion devices that could benefit.

But they have yet to prove they can actually ship it.

Where I Am Right Now

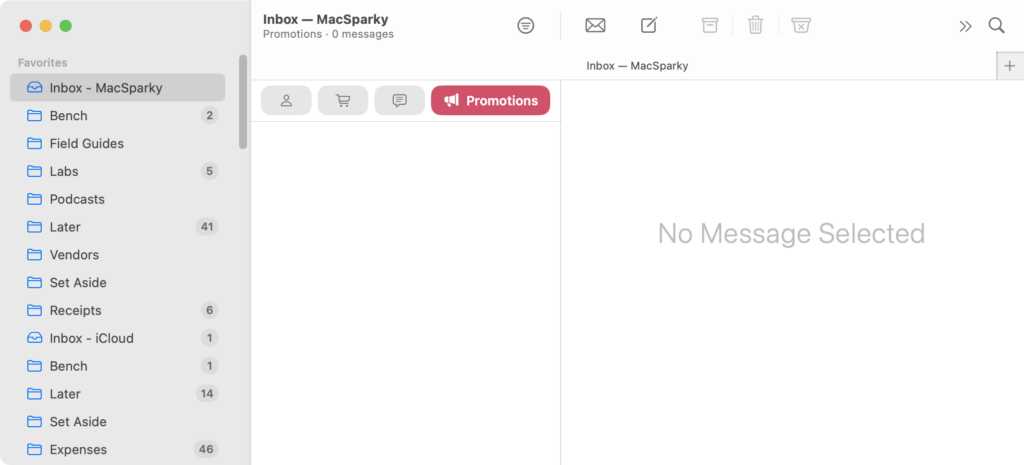

I currently use Siri where I can, but that isn’t very often. I get far more use out of Claude than I do Siri at this point. (Claude’s recent Cowork feature is shockingly impressive.) That’s not where I want to be. I want the assistant built into my devices to be the one I reach for first.

The Bottom Line

All of this feels like it’s reaching a boiling point. We’ve all been patient with Apple for years now. It’s time for them to prove whether or not they can pull this off.

Let’s hope that in six months, Apple has finally answered the call.